🚀 I received my doctoral degree from Southern Methodist University (SMU) on November 18, 2025. I am the first, and currently the only, person in SMU history to complete the Ph.D. program in just two years! Before that, I received my master's degree from the Chinese University of Hong Kong (CUHK) in 2022 and my bachelor's degree from the University of Electronic Science and Technology of China (UESTC) in 2020.

✨✨✨ I am currently on the job market and welcome any opportunities or discussions. Please feel free to reach out if there is a potential fit. ✨✨✨

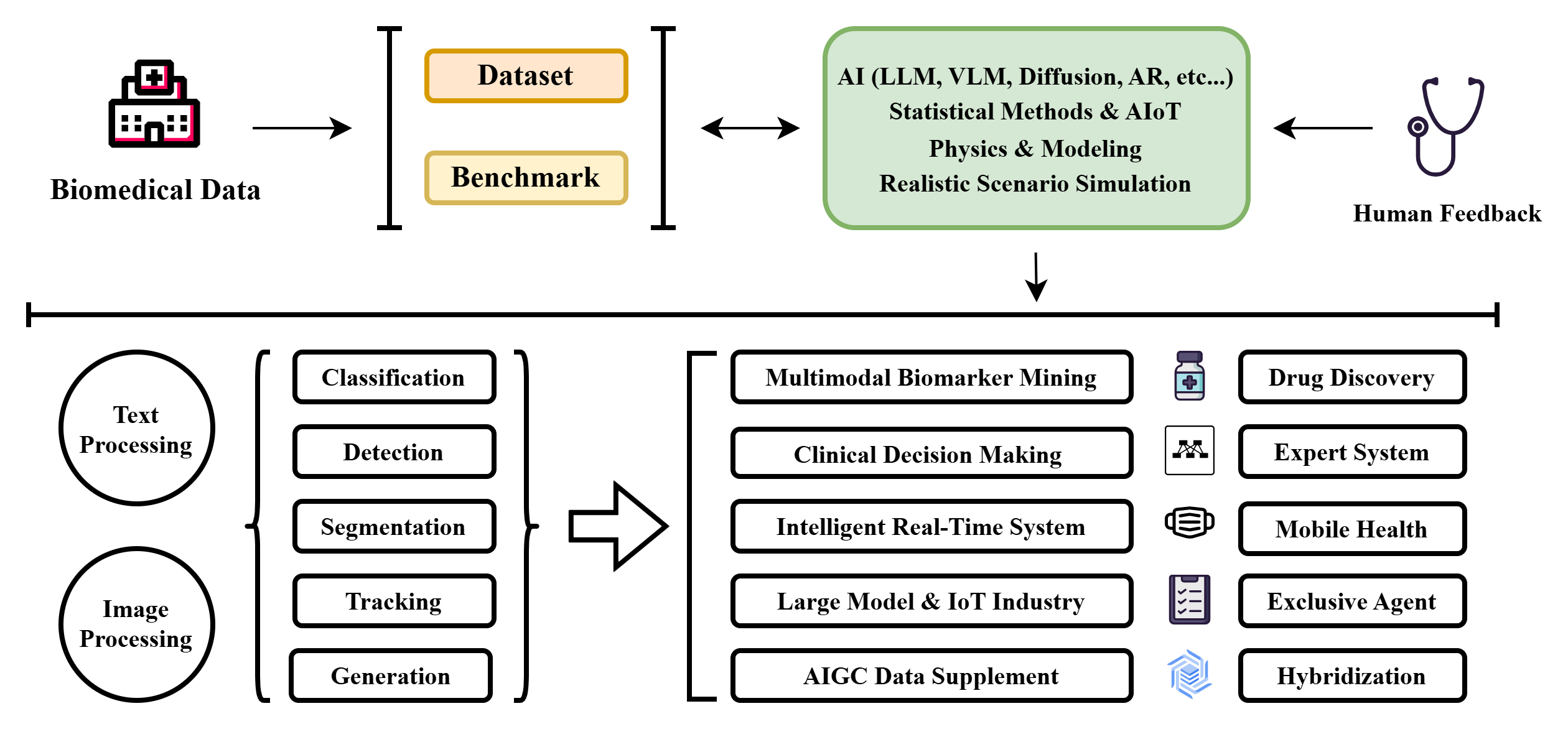

My research interests mainly focus on developing biomarker-driven multimodal AI systems for clinical decision support and disease progression modeling, specifically:

- Medical AI: Developing multimodal models for diagnosis and clinical decision support, integrating diverse medical data.

By the way, I also conduct research in the following areas:

- AI for Finance: Designing data-centric models for risk analysis and anomaly detection, leveraging large-scale structured and temporal financial data.

- Neuromorphic Computing: Exploring next-generation computing paradigms to enable efficient and scalable intelligent systems.

My long-term vision is to advance AI for Health Care by building clinically grounded, trustworthy, and multimodal intelligence systems. I frame this mission through the Hippocrates paradigm:

Research Map: The Schematic Overview of My Research Vision

News

- [2026/05] 4 papers were accepted by IEEE PRAI!

- [2026/04]🔥🔥🔥 3 papers were accepted by IEEE 48th EMBC!

- [2026/01]🔥🔥🔥 1 paper was accepted by IEEE 51st ICASSP!

- [2026/01] 1 paper was accepted by BNNIO 2026!

- [2025/11]🥳🥳🥳 I successfully defended my Ph.D. dissertation.

- [2025/11]🥳🥳🥳 I have been nominated to attend the Nobel Prize and its conference!

- [2025/10]🔥🔥🔥 We have just released the survey: Tibetan Language and AI: A Comprehensive Survey of Resources, Methods and Challenges.

- [2025/09] 1 paper was accepted by IEEE 25th BIBE!

- [2025/08]🚀🚀🚀 1 paper was accepted by EMNLP 2025!

- [2025/07]🔥🔥🔥 1 paper was accepted by ICONIP 2025!

- [2025/05]🥳🥳🥳 I was selected as the Outstanding Graduate Student of the Department of Computer Science!

- [2025/05]🥳🥳🥳 I passed my mid-term Ph.D. defense!

- [2025/04]🔥🔥🔥 2 papers were accepted by IEEE 47th EMBC!

- [2025/03] 1 paper was accepted by IEEE Journal of Systems Engineering and Electronics!

- [2024/11]🥳🥳🥳 I have passed my Ph.D. qualifying exams and am now a Ph.D. candidate rather than a Ph.D. student.

- [2024/11] 1 paper was accepted by IEEE ICNC 2024.

- [2023/12]🔥🔥🔥 2 papers were accepted by AAAI 2024 and its Workshop.

- [2023/12] 1 paper was accepted by Applied and Computational Engineering and selected as the cover paper.

- [2023/08]🚀🚀🚀 The book "Neuromorphic Circuits for Nanoscale Devices" has been published (ISBN: 9787111704119).

- [2023/07]🔥🔥🔥 1 paper was accepted by IEEE ICTAI 2023.

- [2022/12] I have officially graduated with my master's degree!

- [2022/12] 1 paper was accepted by Biomedical Signal Processing and Control.

- [2022/05]🚀🚀🚀 1 paper was accepted by IEEE Transactions on Instrumentation and Measurement and selected as the cover paper.

- [2021/06]🚀🚀🚀 2 papers were accepted by IEEE PRAI 2021, and both won the Excellent Presentation Award.

- [2020/08] 1 paper was accepted by IEEE CDS 2020.

- [2020/07] 1 paper was accepted by IEEE ICVRV 2020.

- [2020/07] 1 paper was accepted by ACM ICRAI 2020.

- [2020/06] I have officially graduated with my bachelor's degree!

- [2020/06]🥳🥳🥳 My undergraduate thesis was selected as an Outstanding Undergraduate Thesis (5/710).

- [2020/05]🔥🔥🔥 1 paper was accepted by IEEE 20th ICCT 2020.

Education

Advisor: Prof. Jia Zhang

Research Direction: Biomarker-Based AI for Diagnosis & Treatment of Glaucoma

Advisor: Prof. John Kar-Kin Zao

Research Direction: AIoT for Public Health

Advisor: Prof. Yongbin Yu

Research Direction: Medical Imaging in Skin Lesion

Work & Research

Collaboration: Dr. Yadi Liu and Jingxi Qiu

Research Direction: AI for Finance

Advisor: Dr. Tsengdar Lee

Research Direction: Medical AI

Advisor: Dr. Karanjit Kooner & Prof. Jui-Kai Wang

Research Direction: Medical Imaging in Glaucoma

Supervisor: Prof. Michael Hahsler

Advisor: Prof. Huazhong Yang & Prof. Lu Zhang

Research Direction: AI Chips Design, Electronic Design Automation

Project: Remote Sensing

Project: 5G Station Development

Selected Publications

* Equal Contribution, † Corresponding Author

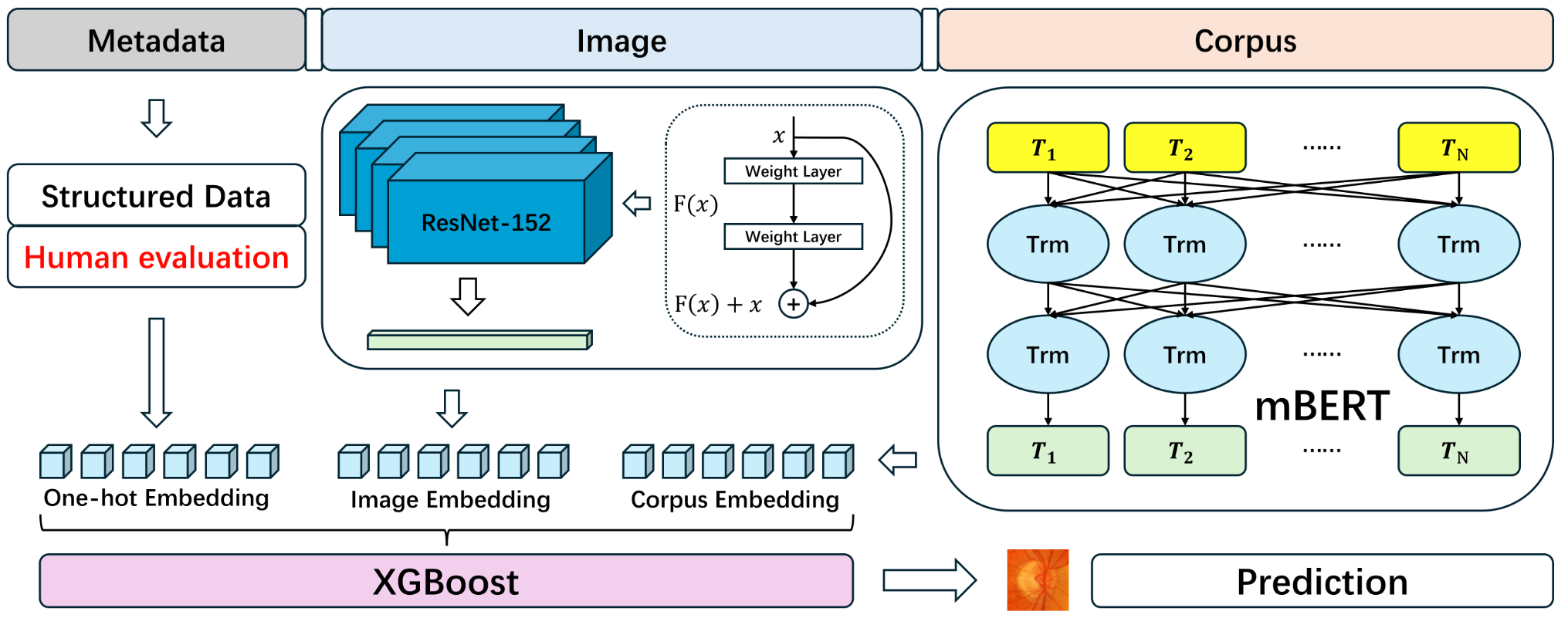

Area: Glaucoma, Ophthalmology AI, Data Mining

GlaBoost is a multimodal framework for glaucoma risk prediction that integrates structured clinical data, fundus image embeddings, and expert textual descriptions into a unified feature space.

It leverages pretrained visual and language encoders alongside an enhanced XGBoost classifier to achieve high predictive performance, reaching 98.71% validation accuracy on real-world datasets.

Importantly, its feature importance analysis aligns with clinical knowledge, offering an interpretable and scalable solution for glaucoma diagnosis.

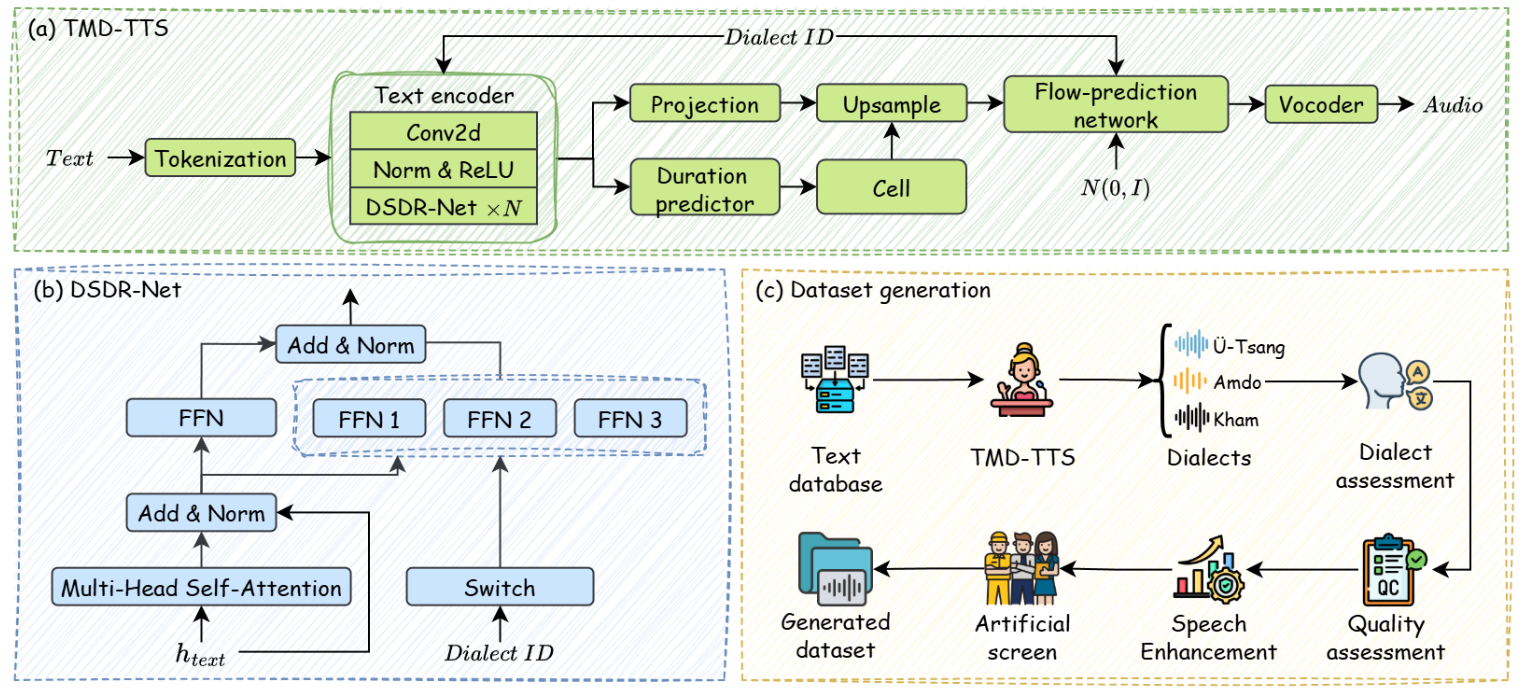

Area: Low Resource Language Processing, Text to Speech, Generative AI

Tibetan speech modeling is constrained by limited parallel corpora across major dialects, hindering multi-dialect synthesis.

To address this, we propose TMD-TTS, a unified framework that generates parallel dialectal speech from explicit dialect labels.

By modeling fine-grained acoustic and linguistic variations, TMD-TTS significantly improves dialectal expressiveness and enables high-quality speech generation across Tibetan dialects.

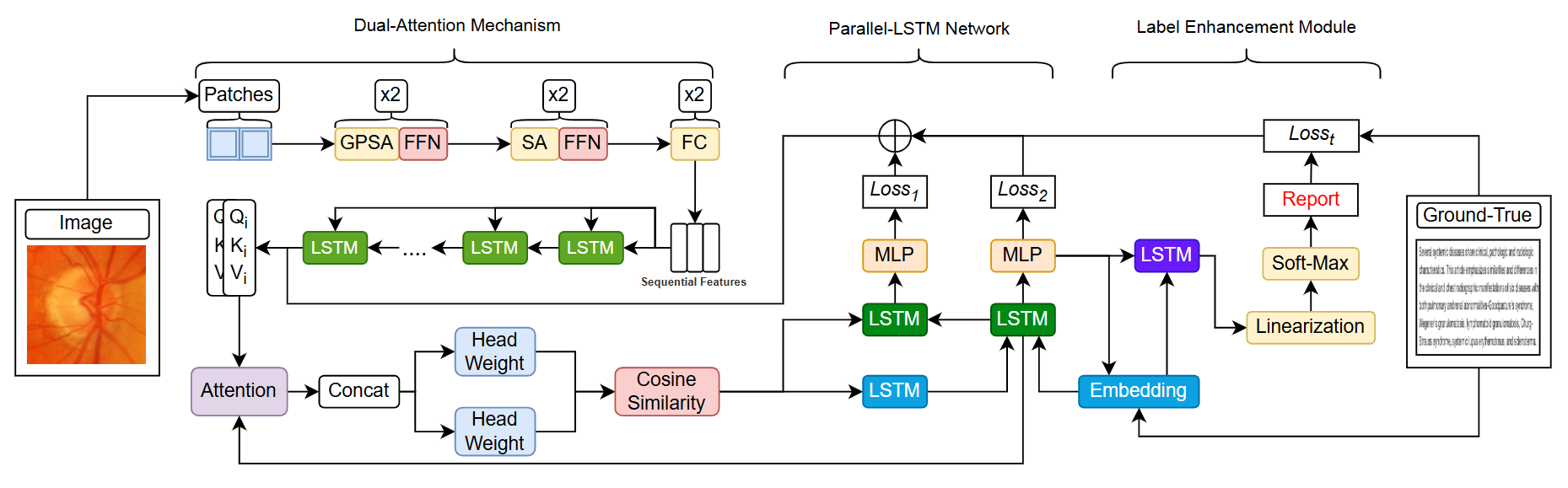

Area: Glaucoma, Ophthalmology AI, Medical Report Generation

Existing methods for glaucoma report generation suffer from redundant narratives and insufficient emphasis on clinically critical features.

To address these limitations, we propose DA-SPL, a dual-attention multimodal framework that improves cross-modal representation and pathology-aware description.

It enables accurate extraction of subtle disease patterns and generates clinically consistent diagnostic reports with superior performance.

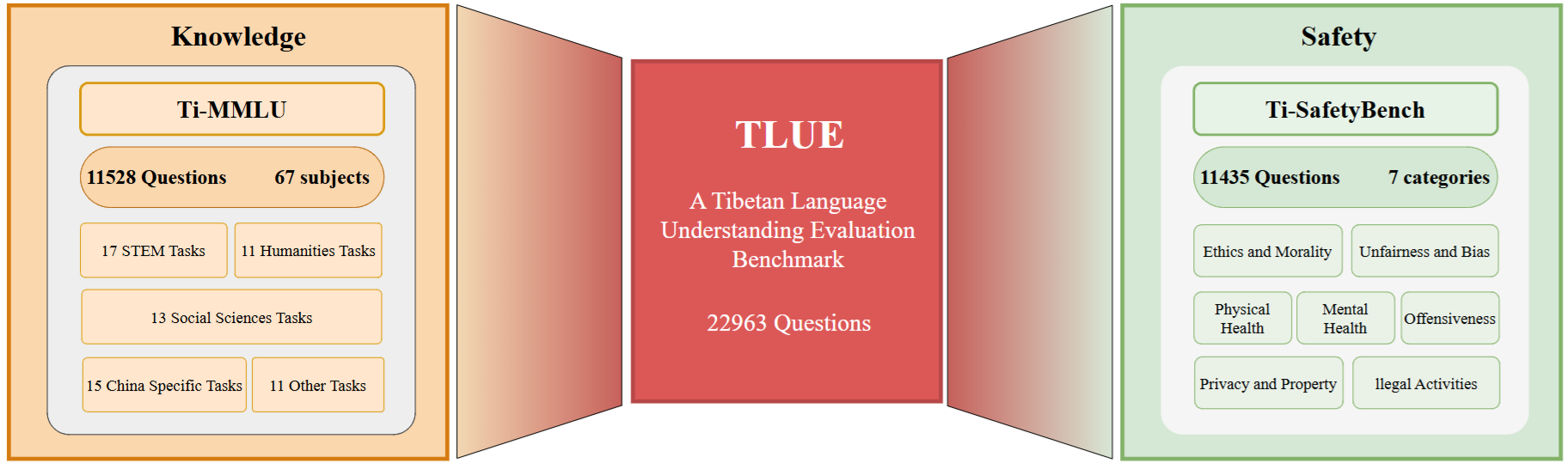

Area: Low Resource Language Processing, Large Language Model, Benchmark

Due to the lack of standardized evaluation in Tibetan NLP, existing large language models cannot be reliably assessed or compared, particularly in reasoning and safety-critical scenarios.

To address this, we propose TLUE, the first unified benchmark for Tibetan LLMs, which enables consistent, reproducible, and comprehensive evaluation.

By resolving fragmented and inconsistent evaluation practices, TLUE establishes a foundation for developing reliable and culturally aligned language models in low-resource settings.

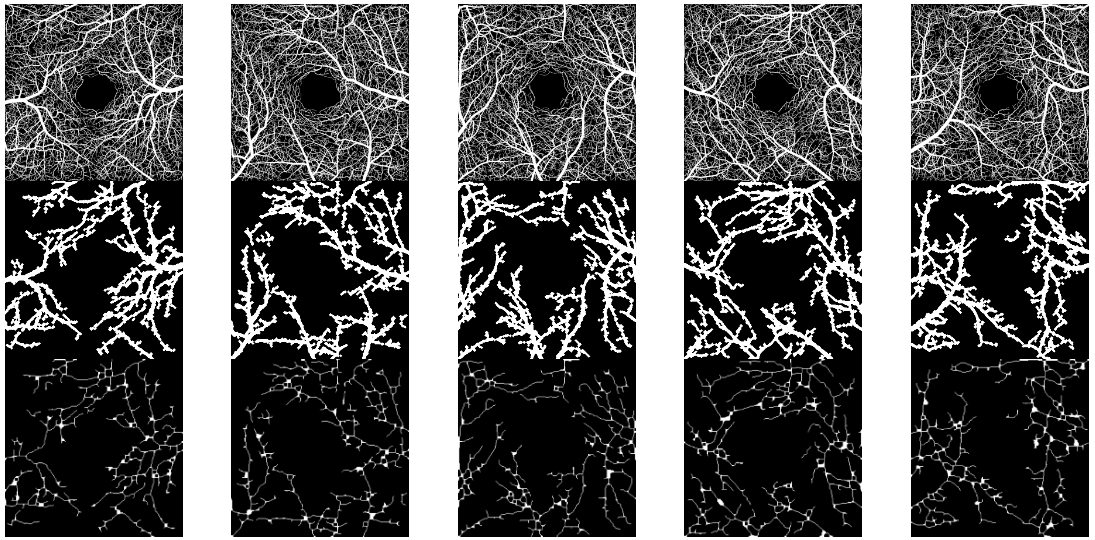

Area: Glaucoma, Ophthalmology AI, Medical Report Generation

Retinal vessel analysis in OCTA is critical for understanding glaucoma progression, yet existing methods struggle with complex vessel structures and rely heavily on large labeled datasets.

To address this, we propose VeinCluster, an unsupervised segmentation algorithm that extracts major vessels and vascular nodes from OCTA images using pixel density-based modeling.

Without requiring extensive annotations or high computational resources, VeinCluster achieves accurate and interpretable vessel segmentation, outperforming existing methods. It further enables downstream analysis of blood flow patterns and supports glaucoma progression modeling.

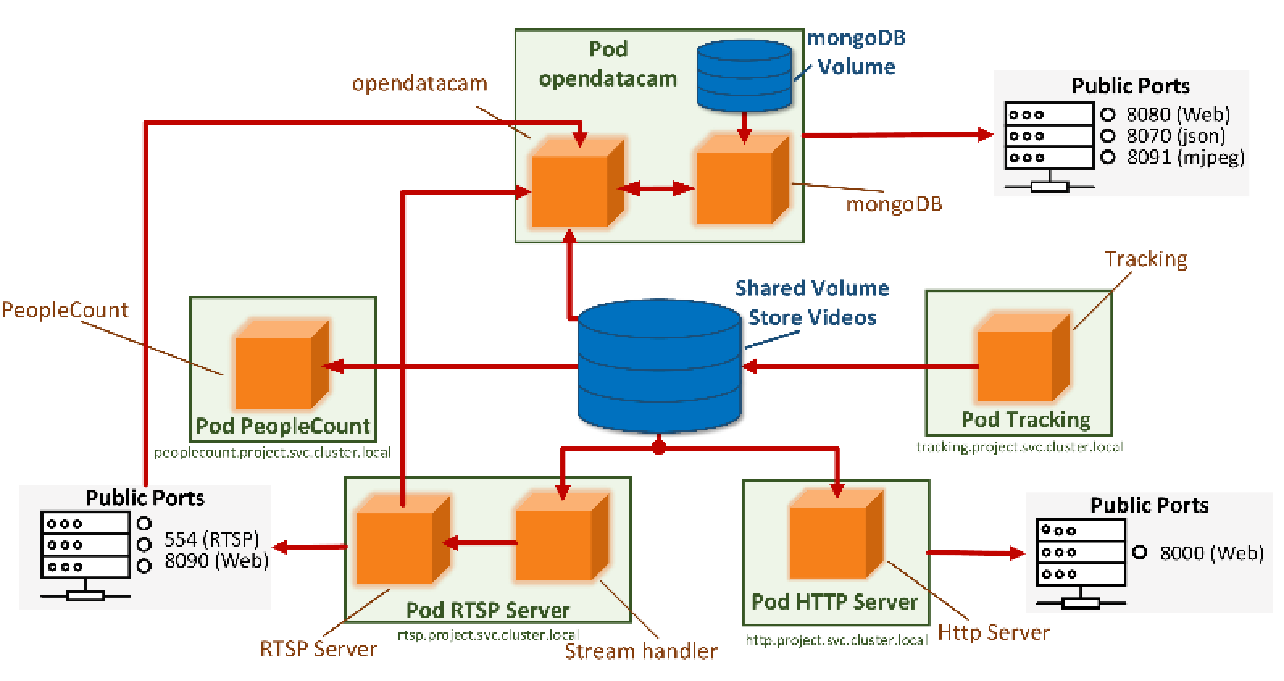

Area: AIoT for Public Health, YOLOv5, Mask Detection

IoT-based deep learning systems are often constrained by limited bandwidth and computational resources, leading to latency and deployment challenges.

To address this, we develop an improved lightweight YOLOv5 framework for efficient edge-side applications, including mask detection, vehicle counting, and target tracking.

By optimizing model efficiency and deploying via Docker and Kubernetes, the system achieves faster inference, reduced storage requirements, and seamless edge–cloud interaction.

This enables real-time, scalable, and resource-efficient intelligent services in IoT environments.

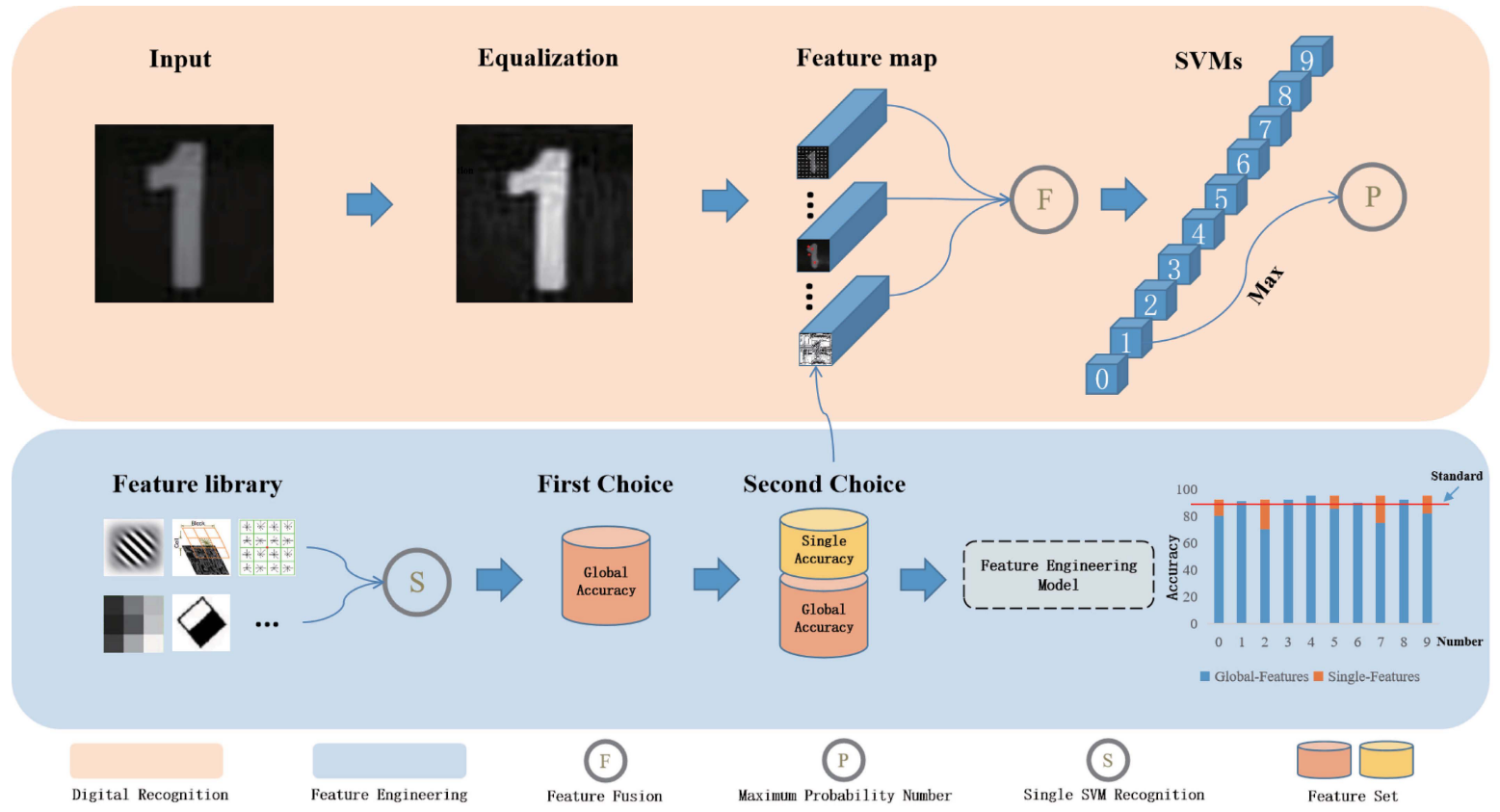

Area: Resource-Efficient AI, Support Vector Machine, Meter Detection

Existing meter recognition methods rely heavily on deep learning and large-scale data, limiting their effectiveness on small or occluded datasets.

To overcome this, we propose MC-FE, a feature-driven multi-classifier framework that adaptively selects discriminative features, along with ML-KRP for precise localization.

The approach enables robust and accurate recognition without large-scale data, outperforming state-of-the-art methods in small-data scenarios.

Interview & Talk

Location: Building 16, No. 10 Xibeiwang East Road, Beijing 100193, China (SoftStone Group Headquarters)

Location: East Tower, Building 1, No. 6 Weigongcun Road, Haidian District, Beijing 100081, China (Ant T Space)

Location: Hangyu Building, No. 20 Financial Street, Beijing 100032, China (E-Fund Management Co., Ltd.)

Location: 225 North Avenue NW, Atlanta, GA 30332, USA (Georgia Institute of Technology)

Academic Service

- Committee: IEEE PRAI'25, AAAI'26

- Conference Reviewer: CVPR'25/26, MICCAI'25/26, ICME'25/26, IEEE PRAI'25, IEEE BIBE'25, ICONIP'25, IEEE EMBC'26, AAAI'26

- Journal Reviewer: IEEE Journal of Translational Engineering in Health and Medicine, American Journal of Diagnostic Imaging, Journal of Computer Sciences and Informatics, The Journal of Supercomputing, Digital Health

Principal Investigator

Project: Federated Learning Alignment Method under Multi-Type Data Distribution (2022KQNCX084)

Category: Guangdong Province Higher Education Youth Innovation Talent Project - Natural Science

Role: Co-PI, with Dr. Siyang Jiang

Description: A study on developing alignment methods in federated learning to address challenges posed by heterogeneous data distributions across clients, including differences in features, labels, and modalities.

Survey & Benchmark & Dataset

- [Tibetan AI] The First Comprehensive Survey on Tibetan Language and AI

- [TLUE] The First Tibetan Language Understanding Evaluation Benchmark

- [TIB-STC] The First and the Most Comprehensive Dataset for Large Language Model Understanding

- [TIBSTC-CoT] The First Dataset for Large Language Model Thinking and Reasoning

- [IFD] The First and Largest Financial Crime Detection Dataset

- [RetinaMix] The high-resolution OCTA dataset for glaucoma retina combining 2D and 3D methods


Personal Interests

- Anime: Dragon Ball series

- Hobbies: Skiing, Fitness, Off-Roading, Hunting, Traveling

- Motto: I am still searching for the flower that belongs to me... at least for now.